The uniformity measure

Measuring entropy without logarithms: An alternative to Shannon entropy, designed for the Neuralink Compression Challenge

Sometimes a challenge is so easy that it stirs disbelief. At first glance, Neuralink’s Compression Challenge appears quite tame: beat ZIP at compressing 741 audio files. However, beneath the surface lies an interesting implication: Neuralink’s brain data is so random that their (hilariously well-compensated) programmers sought assistance from ✨internet math autists✨.

Fun Facts

⦾ I asked Mark Adler, the co-author of the gzip compressor, a silly question. He gave me a silly answer.

⦾ Only 38% of the population is college-educated, so I'll avoid math proofs entirely.

Introduction

Fileforma designs custom file formats for internet companies. We aim to deliver the smallest file sizes, and the equation below guides us.

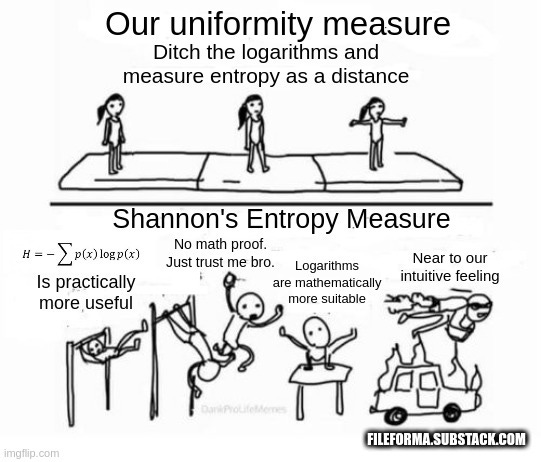

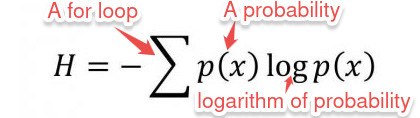

This equation features prominently in Claude Shannon's paper, A Mathematical Theory of Communication. It is a logarithmic measure of entropy.
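For reference, in case the images don't render, this is the entropy formula as it appears in Shannon's paper. Here the p_i are the probabilities of the n possible symbols and K is a positive constant that only fixes the unit; with K = 1 and base-2 logarithms, H is measured in bits.

```latex
H = -K \sum_{i=1}^{n} p_i \log p_i
```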

I assume you can define entropy. If you can’t, perhaps this article isn’t written for you.

Shannon does NOT provide a proof

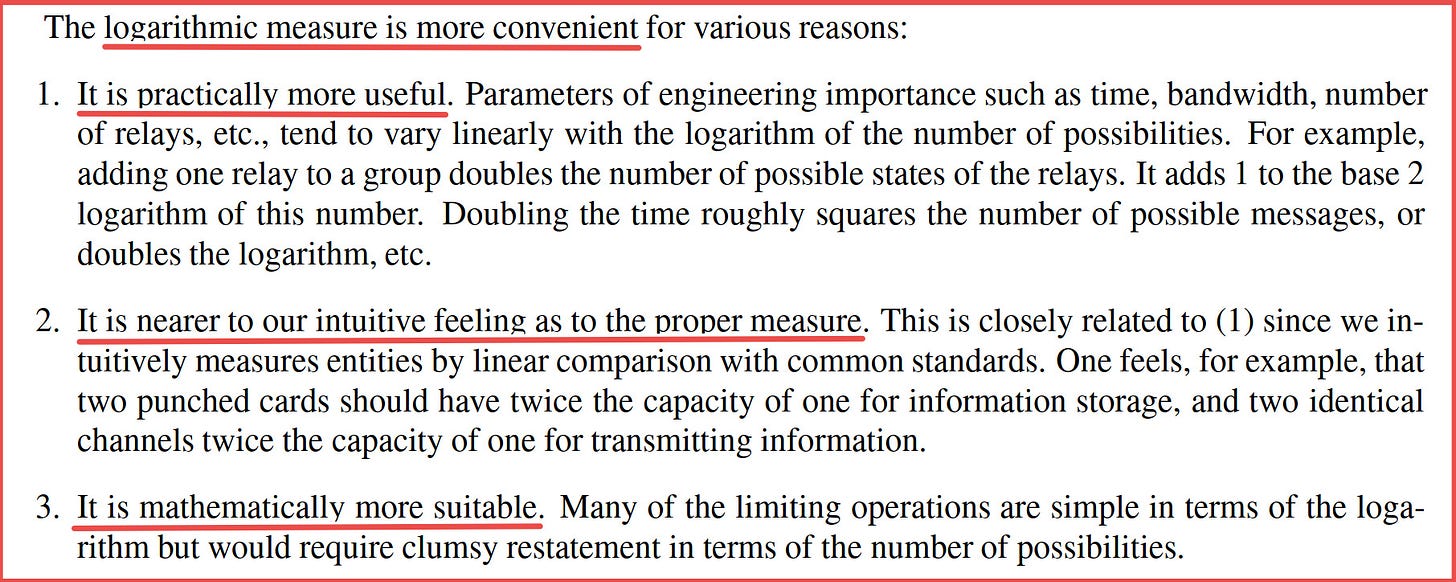

In his canonical paper, A Mathematical Theory of Communication, Claude Shannon fails to provide a proof for his use of logarithms to measure entropy. Instead, he argues that logarithms are ‘less clumsy’ and ‘simpler to use’ than alternative entropy measures.
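The convenience Shannon appeals to is easy to see in code. When two independent systems are combined, their numbers of possible states multiply, but the logarithms of those counts add, so two copies register as exactly twice the information of one. A minimal sketch, using a hypothetical system with 1,000 equally likely states:

```python
import math

# Hypothetical system with 1,000 equally likely states.
states_one = 1_000
# Two independent copies: every state of one pairs with every state of the other.
states_two = states_one * states_one

bits_one = math.log2(states_one)
bits_two = math.log2(states_two)

# The state counts multiply (1,000 -> 1,000,000), but the logarithms add:
# two copies carry twice as many bits as one.
print(bits_one, bits_two, math.isclose(bits_two, 2 * bits_one))
```

This illustrates the argument only; it shows why logarithms are convenient, not that they are the only valid measure.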

Shannon attempts to convince us that logarithms are the best way to measure entropy.

Here’s the problem.

Convincing arguments are not mathematical proofs.

Our Proposal: Uniformity as an entropy measure

We propose the uniformity measure: a ratio that determines a sample's proximity to the uniform distribution.

One calculates the uniformity measure using this algorithm:

1. Take a sample and count the total number of observations.

2. Count the number of unique observations in your sample.

3. Divide the count of unique observations by the size of your sample.

Example
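Before working through the example by hand, here is a minimal Python sketch of the three steps, dividing the unique count by the total count as the worked example does. The function name `uniformity` is our own, and the sketch assumes observations are hashable values (integers, bytes, strings) compared for exact equality.

```python
def uniformity(sample):
    """Return unique observations divided by total observations.

    The result lies in (0, 1]; it equals 1.0 when no value repeats.
    Observations must be hashable (ints, bytes, strings, ...).
    """
    n = len(sample)  # step 1: total number of observations
    if n == 0:
        raise ValueError("uniformity is undefined for an empty sample")
    unique_observations = len(set(sample))  # step 2: distinct values
    return unique_observations / n  # step 3: unique count / total count


print(uniformity([3, 255, 202, 5, 202]))  # prints 0.8
print(uniformity([7, 7, 7, 7]))           # prints 0.25
```

Using a `set` makes step 2 a single pass over the sample, so the whole measure runs in linear time.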

Let's measure the uniformity of this sample: { 3, 255, 202, 5, 202 }

1. Let's count n, the total number of observations in our sample. We get n = 5.

2. Next, we count uniqueObservations, the number of unique observations in our sample. Since 202 is repeated, we have uniqueObservations = 4.

3. Finally, let's divide the number of unique observations by the total number of observations. We get 4 / 5 = 0.8.

The uniformity measure for the list { 3, 255, 202, 5, 202 } equals 0.8.
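To put the example next to the measure it aims to replace, the sketch below computes both the uniformity measure and the empirical Shannon entropy of the same sample. It is self-contained, so both helpers are defined here; the names are ours, and the entropy is in bits with each probability estimated as a symbol's count divided by the sample size. Both helpers assume a non-empty sample.

```python
from collections import Counter
import math


def uniformity(sample):
    # Unique observations divided by total observations.
    return len(set(sample)) / len(sample)


def shannon_entropy_bits(sample):
    # Empirical entropy: sum of p * log2(1/p), with p = count / n.
    # This equals -sum(p * log2(p)) but never yields -0.0.
    n = len(sample)
    return sum((c / n) * math.log2(n / c) for c in Counter(sample).values())


sample = [3, 255, 202, 5, 202]
u = uniformity(sample)
h = shannon_entropy_bits(sample)
print(f"uniformity = {u}")                # uniformity = 0.8
print(f"Shannon entropy = {h:.4f} bits")  # Shannon entropy = 1.9219 bits
```

Note that the uniformity measure needs only counting and one division, while the entropy needs a logarithm per distinct value.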

In our next article, we write a winning solution for the Neuralink Compression Challenge. Get updates on Twitter.

Do you want us to write a custom file format for your company? Reach out at murage@fileforma.com.